OpenAI Hit With First Defamation Suit Over ChatGPT “Hallucination”

Exposing the Real Dangers of AI

By Brian Shillhavy

Posted on June 11, 2023

When an AI program presents false information as fact, it is referred to as AI “hallucination.”

Two recent news stories demonstrate just how foolish and dangerous it is to use programs like ChatGPT for real-world applications and to trust the output that ChatGPT provides.

Isaiah Poritz of Bloomberg Law reported this week that OpenAI, the company that produces ChatGPT, was hit with its first defamation lawsuit after the chatbot allegedly falsely accused a Georgia man of embezzling money.

OpenAI Hit With First Defamation Suit Over ChatGPT Hallucination

OpenAI LLC is facing a defamation lawsuit from a Georgia radio host who claimed the viral artificial intelligence program ChatGPT generated a false legal complaint accusing him of embezzling money.

The first-of-its-kind case comes as generative AI programs face heightened scrutiny over their ability to spread misinformation and “hallucinate” false outputs, including fake legal precedent.

Mark Walters said in his Georgia state court suit that the chatbot provided the false complaint to Fred Riehl, the editor-in-chief of the gun publication AmmoLand, who was reporting on a real life legal case playing out in Washington state.

Riehl asked ChatGPT to provide a summary of Second Amendment Foundation v. Ferguson, a case in Washington federal court accusing the state’s Attorney General Bob Ferguson of abusing his power by chilling the activities of the gun rights foundation.

However, ChatGPT allegedly provided a summary of the case to Riehl that said the Second Amendment Foundation’s founder Alan Gottlieb was suing Walters for “defrauding and embezzling funds” from the foundation as chief financial officer and treasurer.

“Every statement of fact in the summary pertaining to Walters is false,” according to the defamation suit, filed on June 5.

OpenAI didn’t immediately return a request for comment. (Full article.)

In another recent report, an attorney was actually foolish enough to use ChatGPT to research court cases for an actual lawsuit. It produced bogus cases that did not even exist, and these were filed in a New York court of law! The judge, understandably, was outraged.

Lawyer uses ChatGPT in court and now ‘greatly regrets’ it

A New York attorney has been blasted for using ChatGPT for legal research as part of a lawsuit against a Colombian airline.

Steven Schwartz, an attorney with the New York law firm Levidow, Levidow & Oberman, was hired by Robert Mata to pursue an injury claim against Avianca Airlines.

Mata claims he sustained the injury from a serving cart during his flight with the airline in 2019, according to a May 28 report from CNN Business.

However, after a judge noticed inconsistencies and factual errors in the case documentation, Schwartz has admitted to using ChatGPT for his legal research, according to a May 24 sworn affidavit.

He claims that this was his first time using ChatGPT for legal research and that he “was unaware of the possibility that its content could be false.”

In an April 5 court filing, the judge presiding over the case stated:

“Six of the submitted cases appear to be bogus judicial decisions with bogus quotes and bogus internal citations.”

The judge further claimed that certain cases referenced in the submissions did not exist, and there was an instance where a docket number on a filing was mixed up with another court filing. (Full article.)

I came across an excellent article today by Aleksandar Svetski exposing the hype around Chat AI and detailing the real dangers of Chat AI, which he refers to as “The Great Homogenization,” where all the data on the Internet is steered toward a single narrative, something that I have been warning about as well.

This is a must-read article if you want to fully understand what is going on today with Chat AI, and how to fight back.

IF WE’RE NOT CAREFUL, THE AI REVOLUTION COULD BECOME THE ‘GREAT HOMOGENIZATION’

As artificial intelligence grows, so do attempts to control it. But, if we can differentiate the real risks from the fake risks, this technology could be used to encourage diversity of thought and ideas.

Excerpts:

The world is changing before our very eyes. Artificial intelligence (AI) is a paradigm-shifting technological breakthrough, but probably not for the reasons you might think or imagine.

You’ve probably heard something along the lines of, “Artificial general intelligence (AGI) is around the corner,” or, “Now that language is solved, the next step is conscious AI.”

Well… I’m here to tell you that those concepts are both red herrings. They are either the naive delusions of technologists who believe God is in the circuits, or the deliberate incitement of fear and hysteria by more malevolent people with ulterior motives.

I do not think AGI is a threat or that we have an “AI safety problem,” or that we’re around the corner from some singularity with machines.

But…

I do believe this technological paradigm shift poses a significant threat to humanity — which is in fact, about the only thing I can somewhat agree on with the mainstream — but for completely different reasons.

To learn what they are, let’s first try to understand what’s really happening here.

INTRODUCING… THE STOCHASTIC PARROT!

Technology is an amplifier. It makes the good better, and the bad worse.

Just as a hammer is technology that can be used to build a house or beat someone over the head, computers can be used to document ideas that change the world, or they can be used to operate central bank digital currencies (CBDCs) that enslave you to crazy, communist cat ladies working at the European Central Bank.

The same goes for AI. It is a tool. It is a technology. It is not a new lifeform, despite what the lonely nerds who are calling for progress to shut down so desperately want to believe.

What makes generative AI so interesting is not that it is sentient, but that it’s the first time in our history that we are “speaking” or communicating with something other than a human being, in a coherent fashion. The closest we’ve been to that before this point has been with… parrots.

Yes: parrots!

You can train a parrot to kind of talk and talk back, and you can kind of understand it, but because we know it’s not really a human and doesn’t really understand anything, we’re not so impressed.

But generative AI… well, that’s a different story. We’ve been acquainted with it for six months now (in the mainstream) and we have no real idea how it works under the hood. We type some words, and it responds like that annoying, politically-correct, midwit nerd who you know from class… or your average Netflix show.

In fact, you’ve probably even spoken with someone like this during support calls to Booking.com, or any other service in which you’ve had to dial in or web chat. As such, you’re immediately shocked by the responses.

“Holy shit,” you tell yourself. “This thing speaks like a real person!”

The English is immaculate. No spelling mistakes. Sentences make sense. It is not only grammatically accurate, but semantically so, too.

Holy shit! It must be alive!

Little do you realize that you are speaking to a highly-sophisticated, stochastic parrot. As it turns out, language is a little more rules-based than what we all thought, and probability engines can actually do an excellent job of emulating intelligence through the frame or conduit of language.

The law of large numbers strikes again, and math achieves another victory!

But… what does this mean? What the hell is my point?

That this is not useful? That it’s proof it’s not a path to AGI?

Not necessarily, on both counts.

There is lots of utility in such a tool. In fact, the greatest utility probably lies in its application as “MOT,” or “Midwit Obsolescence Technology.” Woke journalists and the countless “content creators” who have for years been talking a lot but saying nothing, are now like dinosaurs watching the comet incinerate everything around them. It’s a beautiful thing. Life wins again.

Of course, these tools are also great for ideating, coding faster, doing some high-level learning, etc.

But from an AGI and consciousness standpoint, who knows? There mayyyyyyyyyyy be a pathway there, but my spidey sense tells me we’re way off, so I’m not holding my breath. I think consciousness is so much more complex, and to think we’ve conjured it up with probability machines is some strange blend of ignorant, arrogant, naive and… well… empty.

So, what the hell is my problem and what’s the risk?

ENTER THE AGE OF THE LUI

Remember what I said about tools.

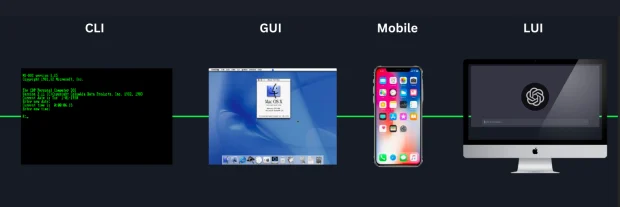

Computers are arguably the most powerful tool mankind has built. And computers have gone through the following evolution:

- Punch cards

- Command line

- Graphical user interface, i.e., point and click

- Mobile, i.e., thumbs and tapping

And now, we’re moving into the age of the LUI, or “Language User Interface.”

This is the big paradigm shift. It’s not AGI, but LUI. Moving forward, every app we interact with will have a conversational interface, and we will no longer be limited by the bandwidth of how fast our fingers can tap on keys or screens.

Speaking “language” is orders of magnitude faster than typing and tapping. Thinking is probably another level higher, but I’m not putting any electrodes into my head anytime soon. In fact, LUIs probably obsolete the need for Neuralink-type tech because the risks associated with implanting chips into your brain will outweigh any marginal benefit over just speaking.

In any case, this decade we will go from tapping on graphical user interfaces, to talking to our apps.

And therein lies the danger.

In the same way Google today determines what we see in searches, and Twitter, Facebook, TikTok and Instagram all “feed us” through their feeds, generative AI will tomorrow determine the answers to every question we have.

The screen not only becomes the lens through which you ingest everything about the world. The screen becomes your model of the world.

Mark Bisone wrote a fantastic article about this recently, which I urge you to read:

“The problem of ‘screens’ is actually a very old one. In many ways it goes back to Plato’s cave, and perhaps is so deeply embedded in the human condition that it precedes written languages. That’s because when we talk about a screen, we’re really talking about the transmission of an illusory model in an editorialized form.

“The trick works like this: You are presented with the image of a thing (and these days, with the sound of it), which its presenter either explicitly tells you or strongly implies is a window to the Real. The shadow and the form are the same, in other words, and the former is to be trusted as much as any fragment of reality that you can directly observe with your sensory organs.”

And, for those thinking that “this won’t happen for a while,” well here are the bumbling fools making a good attempt at it.

THE ‘GREAT HOMOGENIZATION’

Imagine every question you ask, every image you request, every video you conjure up, every bit of data you seek, being returned in such a way that is deemed “safe,” “responsible” or “acceptable” by some faceless “safety police.”

Imagine every bit of information you consume has been transformed into some lukewarm, middle version of the truth, that every opinion you ask for is not really an opinion or a viewpoint, but some inoffensive, apologetic response that doesn’t actually tell you anything (this is the benign, annoying version) or worse, is some ideology wrapped in a response so that everything you know becomes some variation of what the manufacturers of said “safe AI” want you to think and know.

Imagine you had modern Disney characters, like those clowns from “The Eternals” movie, as your ever-present intellectual assistants. It would make you “dumb squared.”

“The UnCommunist Manifesto” outlined the utopian communist dream as the grand homogenization of man:

If only everyone were a series of numbers on a spreadsheet, or automatons with the same opinion, it would be so much easier to have paradise on earth. You could ration out just enough for everyone, and then we’d be all equally miserable proletariats.

This is like George Orwell’s thought police crossed with “Inception,” because every question you had would be perfectly captured and monitored, and every response from the AI could incept an ideology in your mind. In fact, when you think about it, that’s what information does. It plants seeds in your mind.

This is why you need a diverse set of ideas in the minds of men! You want a flourishing rainforest in your mind, not some mono-crop field of wheat, with deteriorated soil, that is susceptible to weather and insects, and completely dependent on Monsanto (or OpenAI or Pfizer) for its survival. You want your mind to flourish and for that you need idea-versity.

This was the promise of the internet. A place where anyone can say anything. The internet has been a force for good, but it is under attack, whether through the de-anonymization of social profiles like those on Twitter and Facebook, the creeping KYC (know-your-customer) requirements across all sorts of online platforms, or the algorithmic vomit that is spewed forth from the platforms themselves. We tasted that in all its glory from 2020. And it seems to be only getting worse.

The push by WEF-like organizations to institute KYC for online identities, and tie it to a CBDC and your iris is one alternative, but it’s a bit overt and explicit. After the pushback on medical experimentation of late, such a move may be harder to pull off. An easier move could be to allow LUIs to take over (as they will, because they’re a superior user experience) and in the meantime create an “AI safety council” that will institute “safety” filters on all major large language models (LLMs).

Don’t believe me? Our G7 overlords are discussing it already.

Today, the web is still made up of webpages, and if you’re curious enough, you can find the deep, dark corners and crevices of dissidence. You can still surf the web. Mostly. But when everything becomes accessible only through these models, you’re not surfing anything anymore. You’re simply being given a synthesis of a response that has been run through all the necessary filters and censors.

There will probably be a sprinkle of truth somewhere in there, but it will be wrapped up in so much “safety” that 99.9% of people won’t hear or know of it. The truth will become that which the model says it is.

I’m not sure what happens to much of the internet when discoverability of information fundamentally transforms. I can imagine that, as most applications transition to some form of language interface, it’s going to be very hard to find things that the “portal” you’re using doesn’t deem safe or approved.

One could, of course, make the argument that in the same way you need the tenacity and curiosity to find the dissident crevices on the web, you’ll need to learn to prompt and hack your way into better answers on these platforms.

And that may be true, but it seems to me that for each time you find something “unsafe,” the route shall be patched or blocked.

You could then argue that “this could backfire on them, by diminishing the utility of the tool.”

And once again, I would probably agree. In a free market, such stupidity would make way for better tools.

But of course, the free market is becoming a thing of the past. What we are seeing with these hysterical attempts to push for “safety” is that they are either knowingly or unknowingly paving the way for squashing possible alternatives.

In creating “safety” committees that “regulate” these platforms (read: regulate speech), new models that are not run through such “safety or toxicity filters” will not be available for consumer usage, or they may be made illegal, or hard to discover. How many people still use Tor? Or DuckDuckGo?

And if you think this isn’t happening, here’s some information on the current toxicity filters that most LLMs already plug into. It’s only a matter of time before such filters become like KYC mandates on financial applications. A new compliance appendage, strapped onto language models like tits on a bull.

Whatever the counter-argument to this homogenization attempt, both actually support my point that we need to build alternatives, and we need to begin that process now.

For those who still tend to believe that AGI is around the corner and that LLMs are a significant step in that direction, by all means, you’re free to believe what you want, but that doesn’t negate the point of this essay.

If language is the new “screen” and all the language we see or hear must be run through approved filters, the information we consume, the way we learn, the very thoughts we have, will all be narrowed into a very small Overton window.

I think that’s a massive risk for humanity.

We’ve become dumb enough with social media algorithms serving us what the platforms think we should know. And when they wanted to turn on the hysteria, it was easy. Language user interfaces are social media times 100.

Imagine what they can do with that, the next time a so-called “crisis” hits?

It won’t be pretty.

The marketplace of ideas is necessary to a healthy and functional society. That’s what I want.

Their narrowing of thought won’t work long term, because it’s anti-life. In the end, it will fail, just like every other attempt to bottle up truth and ignore it. But each attempt comes with unnecessary damage, pain, loss and catastrophe. That’s what I am trying to avoid and help ring the bell for.

WHAT TO DO ABOUT ALL THIS?

If we’re not proactive here, this whole AI revolution could become the “great homogenization.” To avoid that, we have to do two main things:

- Push back against the “AI safety” narratives: These might look like safety committees on the surface, but when you dig a little deeper, you realize they are speech and thought regulators.

- Build alternatives, now: Build many and open source them. The sooner we do this, and the sooner they can run more locally, the better chance we have to avoid a world in which everything trends toward homogenization.

If we do this, we can have a world with real diversity — not the woke kind of bullshit. I mean diversity of thought, diversity of ideas, diversity of viewpoints and a true marketplace of ideas.

An idea-versity. What the original promise of the internet was. And not bound by the low bandwidth of typing and tapping.

Brian Shillhavy